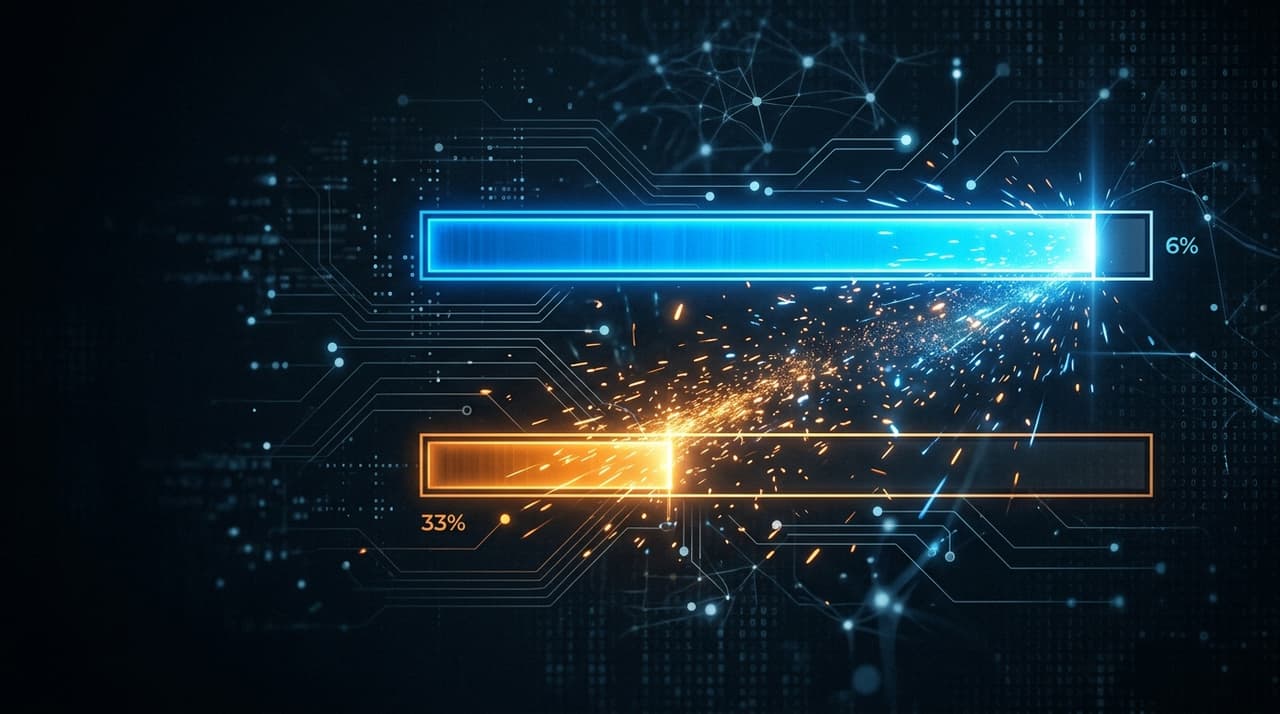

94% Capable. 33% Deployed. The Gap That Explains Everything.

TL;DR

- Anthropic's new exposure metric reveals a 61-percentage-point gap between theoretical AI capability (94% in Computer and Math roles) and actual deployment (33% coverage)

- No systematic unemployment increase detected for highly exposed workers, but a 14% reduction in job-finding rates for 22–25 year olds signals the impact shows up in hiring pipelines, not pink slips

- The bottleneck is not technology. It is deployment: product architecture, governance infrastructure, and organisational design

Ninety-four per cent. That is how much of the work in Computer and Math occupations AI can theoretically handle, according to Anthropic's new labour market exposure research.

Thirty-three per cent. That is how much AI actually handles.

The gap between those numbers is 61 percentage points. It is the single most useful data point in the AI-and-jobs conversation right now, because it explains why both sides of the debate are simultaneously right and wrong.

The doomers are right that AI capability is enormous. Ninety-four per cent coverage across an entire occupational category is not a rounding error. The dismissers are right that mass displacement has not materialised. Unemployment among highly exposed workers is, in Anthropic's words, "indistinguishable from zero."

Both camps are arguing about capability. The actual story is about deployment.

I've spent the last four months building two production SaaS platforms as a solo operator, shipping 50+ AI features each across six LLMs. I operate near the theoretical ceiling that Anthropic's data describes. That experience gives me a specific vantage point: I know what it looks like when the gap closes, because I closed it. And I know the gap is not about the models.

What Anthropic actually measured

Previous studies (notably Eloundou et al.'s "GPTs are GPTs" paper) assessed what AI could theoretically do. They rated tasks by whether an LLM could complete them, and aggregated those ratings into occupational exposure scores. Those scores told you about potential. They told you nothing about reality.

Anthropic's innovation is combining theoretical capability ratings with actual usage data from their own systems. They weighted fully automated implementations at double the rate of augmentative uses. They matched this against O*NET's database of 800+ occupations and validated it against the Current Population Survey and BLS employment projections.

The result: 97% of observed Claude usage involves theoretically feasible tasks, with tasks rated as fully automatable (β=1) accounting for 68% of usage. People are using AI for things AI can do. They're just not using it nearly as much as they could.
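The 2:1 weighting described above can be made concrete with a toy calculation. This is a sketch only, not Anthropic's formula: the article says only that fully automated uses count double augmentative ones, so the absolute weights, the `Task` fields, and the example occupation below are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Assumed weights: only the 2:1 ratio comes from the article.
AUTOMATED_WEIGHT = 1.0
AUGMENTED_WEIGHT = 0.5


@dataclass
class Task:
    name: str
    time_share: float   # fraction of the occupation's work; shares sum to 1.0
    feasible: bool      # rated as theoretically doable by an LLM
    observed_mode: str  # "automated", "augmented", or "none"


def theoretical_coverage(tasks):
    """Share of the occupation's work an LLM could handle in principle."""
    return sum(t.time_share for t in tasks if t.feasible)


def observed_coverage(tasks):
    """Share actually covered, counting automated use at double augmented use."""
    weights = {
        "automated": AUTOMATED_WEIGHT,
        "augmented": AUGMENTED_WEIGHT,
        "none": 0.0,
    }
    return sum(t.time_share * weights[t.observed_mode] for t in tasks if t.feasible)


# A made-up occupation, for illustration only.
tasks = [
    Task("write boilerplate code", 0.30, True, "automated"),
    Task("review pull requests", 0.40, True, "augmented"),
    Task("draft documentation", 0.20, True, "none"),
    Task("on-site hardware repair", 0.10, False, "none"),
]

gap = theoretical_coverage(tasks) - observed_coverage(tasks)
print(f"theoretical: {theoretical_coverage(tasks):.0%}")
print(f"observed:    {observed_coverage(tasks):.0%}")
print(f"gap:         {gap:.0%}")
```

In this toy occupation the feasible tasks that nobody uses AI for, plus the half-weighted augmented ones, are what open the gap between the two figures.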

The authors deserve credit for intellectual honesty. They explicitly note that previous technology-shock predictions (offshorability studies, industrial robot employment effects, China trade shock estimates) failed to materialise at predicted scale. This is not a team making breathless claims. Their headline finding, that actual deployment trails capability by roughly a factor of three (33% against 94%), is presented with full awareness that forecasting labour disruption has a poor track record.

That caution makes the findings more credible, not less.

I saw the identical gap at a smaller scale. At Cotality, I managed a flagship platform with thousands of enterprise seats across Tier 1 Australian banks. We shipped AI features that worked technically. Adoption sat in the single digits. Not because the capability was missing. Because the features demanded that users break their workflows to accommodate AI, rather than the AI accommodating them. Anthropic is measuring at the macro level what I watched happen at the product level: the gap between what AI can do and what people actually do with it is a product and organisational problem, not a technology problem.

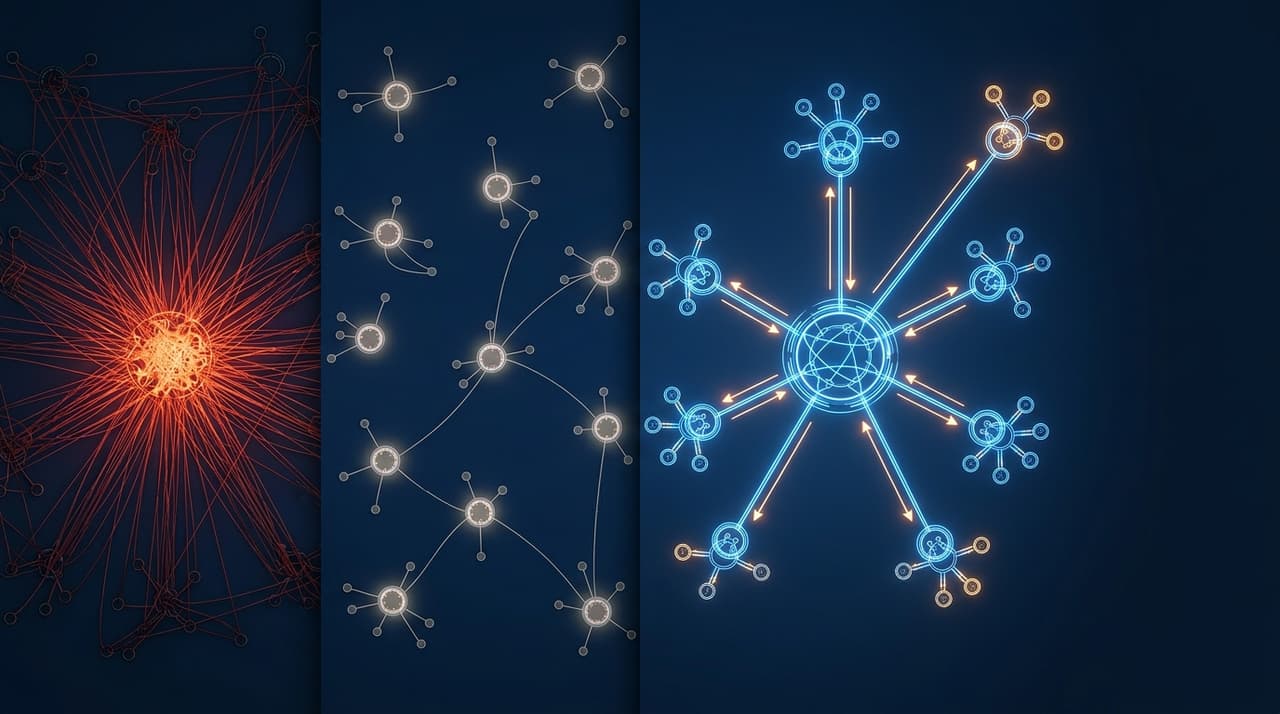

Why is there a 61-point gap between AI capability and deployment?

If the models are 94% capable but only 33% deployed, something structural is preventing adoption. Three forces explain the gap, and each connects to work I've published on this site over the past year.

The destination problem

Most enterprise AI is deployed as chatbots and copilots: destination tools that require users to stop what they're doing, switch context, craft a prompt, wait for a response, evaluate the output, and carry the result back into their actual workflow.

That six-step detour is why the 33% number looks the way it does. The capability exists, but the friction of accessing it suppresses sustained usage. Computer Programmers show 75% coverage partly because coding tools like GitHub Copilot are inline, not destination: the AI meets the developer inside the editor, in the flow of work. Data Entry Keyers show 67% because data entry is a structured, repetitive task that maps cleanly to automation.

The occupations with high theoretical capability but low actual coverage are the ones where AI sits in a separate tab, behind a login, requiring a context switch. The 33% is a product architecture number, not a technology number.

The governance bottleneck

Regulated industries (banking, legal, healthcare) sit in an awkward position. Their workers handle high-value knowledge work with high theoretical AI exposure. Their compliance environments make rapid deployment risky. A hallucinated property valuation delivered to a mortgage broker at a Tier 1 bank is not a minor bug. It is a regulatory incident.

This is not irrational caution. It is entirely legitimate. The question is whether governance frameworks are calibrated to enable deployment at appropriate risk levels, or whether they're blanket bureaucracy that blocks deployment entirely.

At Cotality, I built risk-tiered AI governance for AFSL-regulated clients. Features were classified by risk level. Low-risk features (draft suggestions with human review) shipped fast. High-risk features (autonomous outputs touching financial decisions) went through graduated rollout with banking partners. We scaled from zero to ten production AI features serving AFSL-holding clients with zero regulatory incidents. Governance was the deployment enabler, not the blocker.
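A risk-tiered scheme like the one described above can be sketched in a few lines. This is a minimal illustration, not the framework used at Cotality: the two tiers follow the paragraph's low-risk and high-risk examples, but the classification rule, the field names, and the rollout stages are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass
class AIFeature:
    name: str
    autonomous_output: bool            # result is used without a human approving it
    touches_financial_decisions: bool  # output feeds a regulated financial decision


def classify(feature):
    """Assumed rule: autonomous output that feeds a financial decision is high risk."""
    if feature.autonomous_output and feature.touches_financial_decisions:
        return RiskTier.HIGH
    return RiskTier.LOW


# Hypothetical rollout stages per tier; real stages would be agreed with partners.
ROLLOUT_STAGES = {
    RiskTier.LOW: ["general availability with human review"],
    RiskTier.HIGH: [
        "internal pilot",
        "single-partner pilot",
        "staged partner rollout",
        "general availability",
    ],
}


def rollout_plan(feature):
    """Return the ordered rollout stages for a feature's risk tier."""
    return ROLLOUT_STAGES[classify(feature)]


draft = AIFeature("draft suggestion", autonomous_output=False,
                  touches_financial_decisions=True)
valuation = AIFeature("automated valuation", autonomous_output=True,
                      touches_financial_decisions=True)

print(classify(draft).value, rollout_plan(draft))
print(classify(valuation).value, rollout_plan(valuation))
```

The point of the structure is that the tier, not a case-by-case debate, decides how fast a feature ships.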

The 61-point gap will not close in regulated industries by moving fast and breaking things. It will close by building governance infrastructure that makes deployment safe, auditable, and incremental.

The org chart problem

Deploying AI at its theoretical capability requires reorganising workflows around AI. Not bolting AI onto existing processes, but redesigning the processes themselves. That is a team design question, an incentive design question, and a pricing model question.

Consider the perverse incentive in per-seat SaaS pricing. When your customer successfully deploys AI, they need fewer human seats. Fewer seats means lower revenue for the vendor. The vendor's business model structurally disincentivises helping customers close the capability-deployment gap. You're being rewarded for your customer's inefficiency.
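The arithmetic behind that incentive is simple enough to sketch. Every number below is hypothetical (seat counts, prices, task volume), and the usage-priced comparison is an assumption added for contrast rather than a description of any real vendor's pricing.

```python
def per_seat_revenue(seats, price_per_seat):
    """Monthly vendor revenue when the customer pays per human seat."""
    return seats * price_per_seat


def usage_revenue(tasks_completed, price_per_task):
    """Monthly vendor revenue when the customer pays per completed task."""
    return tasks_completed * price_per_task


# Hypothetical customer: the same work gets done before and after AI deployment,
# but fewer people are needed to do it.
TASKS_PER_MONTH = 10_000
SEATS_BEFORE, SEATS_AFTER = 100, 60
PRICE_PER_SEAT = 50.0   # assumed
PRICE_PER_TASK = 0.50   # assumed

seat_before = per_seat_revenue(SEATS_BEFORE, PRICE_PER_SEAT)
seat_after = per_seat_revenue(SEATS_AFTER, PRICE_PER_SEAT)
usage_before = usage_revenue(TASKS_PER_MONTH, PRICE_PER_TASK)
usage_after = usage_revenue(TASKS_PER_MONTH, PRICE_PER_TASK)

print(f"per-seat:    {seat_before:,.0f} -> {seat_after:,.0f}")
print(f"usage-based: {usage_before:,.0f} -> {usage_after:,.0f}")
```

Under these assumptions the per-seat vendor loses 40% of its revenue when the customer succeeds, while the usage-priced vendor loses nothing.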

And internally, organisations face the problem I described in the team-of-one thesis: deploying AI at full capability means rethinking headcount assumptions, management layers, and what "growth" looks like. That requires leadership willing to redesign the org chart, not just approve an AI budget.

The 33% is what you get when organisations add AI to existing structures. Closing the gap requires redesigning the structures themselves.

The hiring shift nobody is tracking

Anthropic's most consequential finding is not about firings. It is about hiring.

No systematic increase in unemployment was detected for highly exposed workers since late 2022. The researchers' framework is sensitive enough to detect differential unemployment increases of around one percentage point. It found nothing.

Where the signal does appear: a 14% reduction in job-finding rates for workers aged 22–25 entering exposed occupations in the post-ChatGPT period. The authors flag this as barely statistically significant, and I'll extend them the same honesty here. This is a signal, not a proven conclusion. It warrants attention, not certainty.

But it aligns with what I've been watching in the market. LinkedIn scrapped its Associate Product Manager program entirely, replacing it with a "Full Stack Builder" track. The translation layer between business and engineering is compressing. When I wrote about hiring for autonomy instead of capacity, the argument was directional. Anthropic's data gives it the first empirical anchor.

The impact is not mass layoffs. It is a quiet contraction in entry-level hiring for knowledge work. Organisations are not firing experienced workers. They are choosing not to backfill junior roles, absorbing the capacity through AI augmentation instead. The 14% shows up in hiring pipelines, not in pink slips.

The demographic profile of exposed workers makes this more pointed. Compared to workers with zero AI exposure, highly exposed workers are 16 percentage points more likely to be female, 11 percentage points more likely to be white, nearly twice as likely to be Asian, earn 47% more on average, and hold graduate degrees at 3.9 times the rate (17.4% versus 4.5%). Thirty per cent of workers have zero exposure: cooks, motorcycle mechanics, lifeguards, bartenders, dishwashers.

This is not an equal-opportunity disruption. The workers most exposed are experienced, educated, and well-compensated. The knowledge-work middle class.

If I were rebuilding my 21-person product organisation at Cotality from scratch today, the team would look different. Not necessarily smaller in headcount (regulated enterprise environments still need human judgment at scale), but fundamentally different in composition. Fewer coordination roles. Fewer translation roles. More autonomous operators with the builder skillset and governance fluency to direct AI systems, not just use them.

What closes the gap

The gap will close. Three forces drive it.

Agentic AI closes the usage gap. Copilots require human initiative. You have to remember the AI exists, switch to it, prompt it, and evaluate its output. Agents operate autonomously within defined boundaries, surfacing only exceptions for human review. The shift from chatbots to multi-agent systems that execute in the background is the deployment mechanism that converts theoretical capability into actual coverage. When AI stops waiting for you to ask and starts doing the work, the 33% moves.

Governance infrastructure makes deployment safe. Regulated sectors will not close the gap by ignoring compliance requirements. They will close it by building risk-tiered governance frameworks that make autonomous AI deployment auditable, incremental, and compliant. The governance work I did at Cotality, and that I've documented in the risk-tiered framework, is a template: classify features by risk, graduate rollout by trust level, monitor outputs continuously.

Pricing and org redesign make deployment rational. Per-seat pricing punishes AI deployment. Outcome-based and usage-based pricing rewards it. Until the economic incentives align, vendors will under-invest in helping customers close the gap, and customers will under-deploy relative to capability. The pricing model is a deployment lever, not just a revenue model.

Anthropic's data tells you what happens as the gap closes: for every 10 percentage point increase in actual coverage, BLS employment growth projections decline by 0.6 percentage points. Not a cliff. A gradient. The pace of closure matters as much as the fact of it.
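To get a feel for that gradient, here is a back-of-envelope sketch. It assumes the 0.6-per-10-points relationship is linear and holds for any size of coverage increase. That is an assumption for illustration, not something the research claims, and the function name is hypothetical.

```python
# Quoted figure: a 0.6 percentage point decline in projected employment growth
# for every 10 percentage point increase in actual coverage.
DECLINE_PER_10_POINTS = 0.6


def projected_growth_change(coverage_increase_points):
    """Linear extrapolation of the quoted gradient, in percentage points.

    Assumes the relationship scales linearly, which the source does not claim.
    """
    return -DECLINE_PER_10_POINTS * coverage_increase_points / 10


# Coverage increases to try: one step, a partial closure, and the full 61-point gap.
for increase in (10, 30, 61):
    change = projected_growth_change(increase)
    print(f"+{increase} points of coverage -> {change:+.2f} points of projected growth")
```

Even closing the full 61-point gap works out, on this naive extrapolation, to a few percentage points of projected growth: a slope, not a cliff.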

The honest position

I've written more than fifteen articles on this site about the opportunities AI creates for product builders, for organisations willing to restructure, for individuals who pick up the tools and ship. I still believe those arguments. Anthropic's data validates the core claim: the capability is real, and the people who deploy it capture enormous leverage.

This article adds the distributional reality that the opportunity framing alone does not cover.

The workers most exposed are not abstract economic units in a BLS projection. They are disproportionately female, disproportionately educated, disproportionately well-compensated. They are the people who built careers on knowledge work. The 14% hiring signal, fragile as it is, suggests the path into those careers is already narrowing for the next generation.

The gap should close. The productivity gains are real and large. But how it closes is a design decision, not an inevitability. A gradual closure, through attrition, reskilling, and role evolution, is manageable. An abrupt one, through displacement without transition, is not. The pace depends on the governance, organisational design, and policy choices being made right now.

Everything I've written about governance, team design, and builder identity is, viewed through this lens, about closing the gap responsibly. Not pretending it does not exist. Not pretending it should not close. Insisting that how it closes is something we can shape, and that the people shaping it should understand the technology well enough to make informed choices.

That requires leaders who build, not just strategise. It requires governance that enables, not just restricts. And it requires an honest accounting of who bears the cost when the 33% moves toward the 94%.

Frequently Asked Questions

Does this research mean AI will not cause job losses?

No. The 0.6 percentage point employment growth decline per 10 percentage point increase in coverage is real economic pressure. Anthropic's finding is that the impact has not shown up as unemployment yet, because deployment lags capability by a wide margin. As the gap closes, through agentic AI, better governance, and organisational redesign, the employment effects will materialise. The pattern looks more like slower hiring and role consolidation than mass layoffs, but the pressure is directionally significant.

Why is the capability-deployment gap so large?

Three structural factors: product architecture (most AI is deployed as destination tools requiring workflow disruption), governance constraints (regulated industries face legitimate compliance barriers), and organisational inertia (deploying AI at full capability requires redesigning team structures and business models, not just approving tool licenses). Closing it requires architectural and organisational change, not better models.

Are junior roles actually disappearing?

The 14% job-finding rate reduction for 22–25 year olds is suggestive but statistically fragile. LinkedIn scrapping its APM program and the broader shift toward autonomous builder roles provide corroborating signals. The honest answer: probably, gradually, in specific knowledge-work occupations. Organisations that historically hired juniors for execution capacity are absorbing that capacity through AI instead of hiring. The training-ground function of junior roles needs deliberate redesign, or the talent pipeline dries up.

How does this research apply outside the US?

Anthropic's study uses US data (Current Population Survey, BLS projections, O*NET). The theoretical capability scores are broadly applicable since they describe task characteristics, not geography. The deployment rates will vary by regulatory environment, AI adoption culture, and industry mix. Australian regulated sectors (banking, financial services, property) face similar dynamics to US equivalents: high theoretical exposure constrained by compliance requirements, with governance infrastructure as the key enabler of responsible deployment.

Logan Lincoln

Product executive and AI builder based in Brisbane, Australia. Nine years in regulated B2B SaaS, currently shipping production AI platforms. Written from experience with enterprise AI at Cotality.