AI Governance Without Bureaucracy: A Framework That Ships

TL;DR

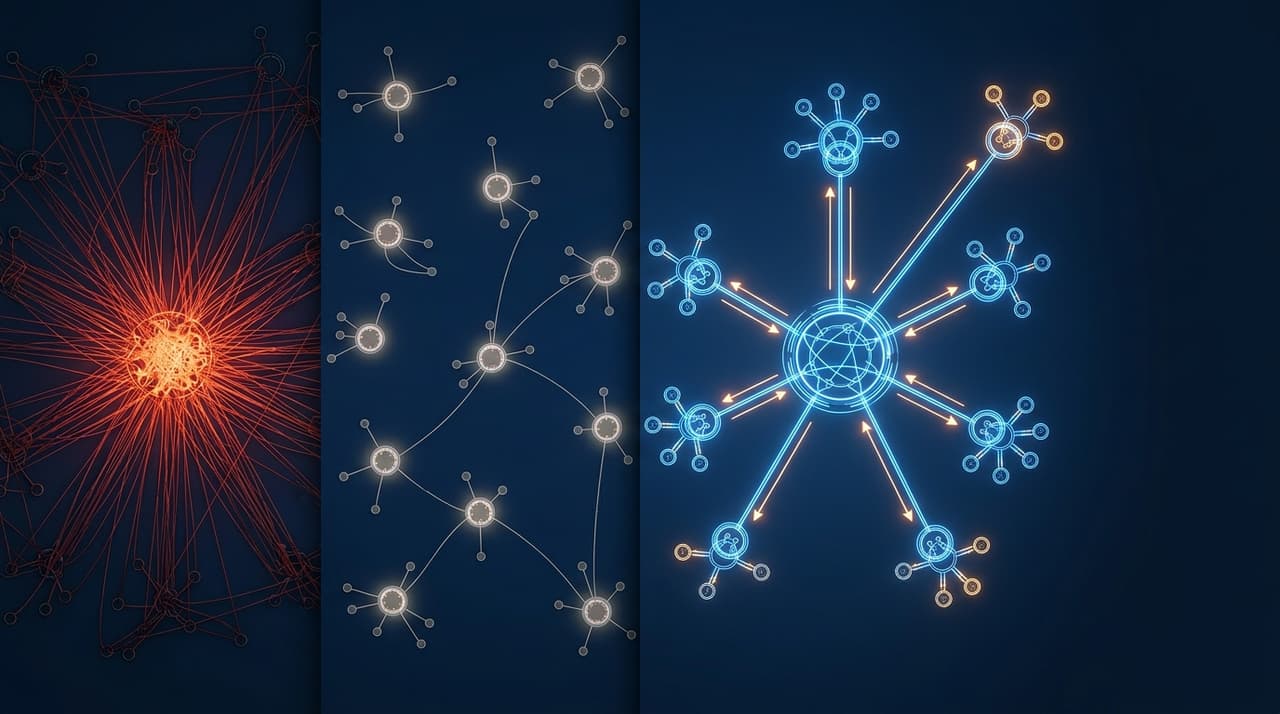

- Most AI governance frameworks are either toothless (a checklist nobody reads) or paralysing (a committee that blocks everything), and both fail the same way: AI features don't ship, or they ship without real oversight

- The fix is risk-tiered governance: classify AI features by the damage a failure could cause, then match governance rigour to the risk tier

- I built this framework at Cotality, shipping AI features to AFSL-regulated Tier 1 banks with no AI-related regulatory incidents during my tenure, and it's the same model I use for everything I build now

AI governance has a credibility problem.

At one end, you have "governance theatre": a PDF that says "we are committed to responsible AI" and a quarterly review meeting where everyone nods and nothing changes. The AI features ship without meaningful oversight. When something goes wrong, the PDF doesn't help.

At the other end, you have "governance as bottleneck": every AI feature needs legal review, compliance sign-off, ethics committee approval, and a risk assessment that takes longer than building the feature. The process works exactly once; after that, the engineering team finds ways to avoid triggering it. AI features either don't ship or ship through side doors that bypass the process entirely.

Both failure modes have the same root cause: the governance isn't calibrated to the risk. A low-risk AI feature (generating a marketing tagline) gets the same treatment as a high-risk one (generating a property valuation used in a lending decision). That's either too little governance for the high-risk feature or too much governance for the low-risk one. Usually both.

How does risk-tiered AI governance work?

Not all AI features carry the same risk. A hallucinated product description is embarrassing. A hallucinated financial figure in a report sent to a bank is a regulatory incident. Treating these the same, either by ignoring the distinction or by applying maximum governance to both, is organisationally irrational.

The principle: match governance rigour to the damage a failure would cause.

At Cotality, I established an AI Governance Working Group and built a risk classification framework for exactly this purpose. The company's clients included AFSL-holding institutions (CBA, NAB, ANZ). A property valuation displayed to a mortgage broker who uses it to assess a loan application is a fundamentally different risk profile from an AI-generated neighbourhood description on a consumer portal.

We classified every AI feature into risk tiers before a line of code was written, and the tier determined the governance path.

The three tiers

Tier 1: Low risk (ship fast, monitor)

Characteristics: The AI output is supplementary, not authoritative. A human always sees the output before any decision is made. The failure mode is inconvenience or mild quality degradation, not financial or legal harm.

Examples: AI-generated marketing copy, content summaries, search result ranking, internal-facing recommendations, draft emails.

Governance requirement: Standard code review. Basic eval suite (20 to 50 test cases). Output monitoring with quality alerts. No additional approval required.

Rationale: The cost of a bad output is low and recoverable. The user can ignore, edit, or override the output. Adding heavyweight governance to these features creates process overhead without proportional risk reduction.

Tier 2: Medium risk (structured review, human-in-the-loop)

Characteristics: The AI output influences decisions with financial, operational, or reputational impact. A failure could cause material harm, but human review is part of the workflow. The AI assists rather than decides.

Examples: AI-generated property descriptions used by agents, AI-assisted data extraction from legal documents, AI-powered customer communications, automated data classification.

Governance requirement: Expanded eval suite (100+ test cases with adversarial examples). Human-in-the-loop mandatory at launch, with a defined process for escalating to Tier 3 review if the feature scope expands. Quarterly accuracy review. Documented rollback plan.

Rationale: The output matters and reaches external parties. Human review catches most failures, but the governance process ensures the human review is actually happening (not being rubber-stamped) and that quality is tracked systematically.

Tier 3: High risk (full governance review, continuous audit)

Characteristics: The AI output directly informs decisions with significant financial, legal, or regulatory consequences. A failure could trigger regulatory scrutiny, financial loss, or reputational damage to the company or its clients. The output may be consumed by regulated entities.

Examples: AI-generated property valuations consumed by banks, AI-driven risk assessments, automated compliance checks, AI outputs that feed into auditable financial processes.

Governance requirement: Full governance working group review before development begins. Comprehensive eval suite (500+ test cases including edge cases and adversarial inputs). Mandatory human audit of 100% of outputs at launch, transitioning to spot checks only after sustained accuracy above 99%. Continuous regression monitoring. Incident response plan. Quarterly external review. Documented decision trail for every output.

Rationale: The damage from a failure justifies the governance cost. In a regulated environment, the governance isn't optional. It's the price of operating. But by limiting this level of scrutiny to features that genuinely warrant it, you protect the high-risk features without paralysing the low-risk ones.
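One way to keep the tiers from drifting back into a PDF is to encode them as data that a release checklist can read. The sketch below is illustrative only: the names `Tier`, `GovernancePath`, `GOVERNANCE_PATHS`, and `launch_blockers` are hypothetical, and it captures only a subset of the requirements above (eval suite size, human review at launch, working group review, and one required document per tier).

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class GovernancePath:
    min_eval_cases: int                  # smallest acceptable eval suite
    human_review_at_launch: str          # what the tier mandates at launch
    working_group_review: bool           # review before development begins
    required_documents: tuple[str, ...]  # documents that must exist before launch


# Values taken from the tier definitions above; the structure is an assumption.
GOVERNANCE_PATHS = {
    Tier.LOW: GovernancePath(20, "not mandated", False, ()),
    Tier.MEDIUM: GovernancePath(100, "human-in-the-loop", False, ("rollback plan",)),
    Tier.HIGH: GovernancePath(500, "audit 100% of outputs", True, ("incident response plan",)),
}


def launch_blockers(tier: Tier, eval_cases: int, documents: set[str]) -> list[str]:
    """Return the unmet requirements for this tier (an empty list means clear)."""
    path = GOVERNANCE_PATHS[tier]
    blockers = []
    if eval_cases < path.min_eval_cases:
        blockers.append(f"eval suite has {eval_cases} cases, needs {path.min_eval_cases}+")
    for doc in path.required_documents:
        if doc not in documents:
            blockers.append(f"missing {doc}")
    return blockers


print(launch_blockers(Tier.MEDIUM, 80, set()))
```

A Tier 2 feature with 80 eval cases and no rollback plan comes back with two blockers; a Tier 1 feature with 25 cases comes back clean.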

Product owns governance, not legal

This is the structural decision that makes the framework operational.

At most organisations, AI governance lives with legal or compliance. This feels natural: governance is about risk, legal manages risk, therefore legal should own governance.

It doesn't work. Legal and compliance can identify risks. They can define requirements. They can review and approve. They shouldn't own the framework alone, because they don't own the decisions that the framework governs: what to build, how to build it, how to price it, how to deploy it, and how to monitor it.

At Cotality, product chaired the AI Governance Working Group. Legal, compliance, engineering, and data science were critical members. But the product leader held accountability because the product leader is the one who decides what gets built, what tier it falls into, and whether the governance requirements are met before shipping.

This isn't about sidelining legal. It's about accountability. If the product leader has to stand in front of the governance group and defend the risk classification, the deployment plan, and the monitoring approach before shipping, the governance is real. If legal has to approve a feature they don't fully understand against criteria they didn't help define, the governance is a signature on a form.

Unit economics before build

Every AI feature I shipped required an investment case before entering the development backlog. Not a business case in the enterprise sense (50-page document, six months of analysis). A one-page analysis:

- Inference cost per request. What model, what token volume, what cost at projected scale?

- Expected usage volume. How many requests per day/week/month based on the user base and the task frequency?

- Pricing impact. Does this feature justify a price increase? Is it included in the existing tier? Does it need metered billing?

- Margin analysis. At projected usage, what's the gross margin? Does the margin improve or degrade with scale?

This prevented the "add AI to everything" trap. When every AI feature has to show its unit economics before a line of code is written, the features that get built are the ones that make commercial sense, not just the ones that make impressive demos.

The one-page investment case is also a governance artifact. It forces the product team to think about the AI feature as a business decision, not just a technical capability. Features that can't justify their inference cost don't get built. Features that can justify their cost but fall in Tier 3 get the governance scrutiny that their risk warrants.
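The arithmetic behind the one-pager is simple enough to sketch. Everything in the example below is an assumption for illustration: the `InvestmentCase` name, the token counts, the per-million-token prices, the usage volume, and the revenue figure are made up, and "gross margin" here counts inference cost only, ignoring hosting, support, and other costs of goods sold.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InvestmentCase:
    input_tokens_per_request: int
    output_tokens_per_request: int
    input_price_per_million: float    # currency units per 1M input tokens
    output_price_per_million: float   # currency units per 1M output tokens
    requests_per_month: int
    monthly_revenue: float            # revenue attributed to the feature

    @property
    def cost_per_request(self) -> float:
        token_cost = (
            self.input_tokens_per_request * self.input_price_per_million
            + self.output_tokens_per_request * self.output_price_per_million
        )
        return token_cost / 1_000_000

    @property
    def monthly_inference_cost(self) -> float:
        return self.cost_per_request * self.requests_per_month

    @property
    def gross_margin(self) -> float:
        """Margin as a fraction of revenue, counting inference cost only."""
        if self.monthly_revenue <= 0:
            raise ValueError("monthly_revenue must be positive")
        return (self.monthly_revenue - self.monthly_inference_cost) / self.monthly_revenue


# Hypothetical figures, not real pricing or usage data.
case = InvestmentCase(
    input_tokens_per_request=2_000,
    output_tokens_per_request=500,
    input_price_per_million=3.0,
    output_price_per_million=15.0,
    requests_per_month=100_000,
    monthly_revenue=5_000.0,
)
print(f"cost per request: {case.cost_per_request:.4f}")
print(f"monthly inference cost: {case.monthly_inference_cost:.2f}")
print(f"gross margin: {case.gross_margin:.0%}")
```

Re-running the same case at two or three projected volumes shows whether the margin improves or degrades with scale, which is the question the margin analysis is meant to answer.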

The governance decision tree

When a new AI feature is proposed, walk through these questions in order:

1. What happens if the AI is wrong?

- The user is mildly inconvenienced → Tier 1

- The user makes a worse decision → Tier 2

- The user or a downstream party suffers financial, legal, or regulatory harm → Tier 3

2. Who sees the output?

- Only internal users → Lower tier

- External customers → Higher tier

- Regulated entities → Tier 3

3. Is there a human in the loop?

- Human reviews every output before action → Can be one tier lower

- Human reviews samples → Stays at current tier

- No human review → Must be one tier higher

4. Is the output auditable?

- Full decision trail (input, model, output, timestamp) → Governance requirement met

- Partial audit trail → Requires remediation before launch

- No audit trail → Cannot ship

This tree takes 15 minutes to walk through. It replaces weeks of ambiguous risk assessment with a structured, repeatable classification. Any product manager can apply it. Any governance reviewer can verify it.
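The four questions can also be written down as a small function, which makes the classification easy to verify and to re-run when a feature changes. The sketch below is one possible reading of the tree, not the definitive one, and every name in it is hypothetical. It assumes that question 1 sets the base tier, question 3 shifts it by one, and question 2 then acts as a floor (internal: no floor, external: at least Tier 2, regulated: always Tier 3). Question 4 is reported separately as a ship status.

```python
from enum import IntEnum


class Tier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Question 1: what happens if the AI is wrong?
HARM_TIER = {
    "inconvenience": Tier.LOW,
    "worse_decision": Tier.MEDIUM,
    "financial_legal_regulatory": Tier.HIGH,
}
# Question 3: is there a human in the loop?
REVIEW_SHIFT = {"every_output": -1, "samples": 0, "none": 1}
# Question 2: who sees the output? (read here as a minimum tier; an assumption)
AUDIENCE_FLOOR = {"internal": Tier.LOW, "external": Tier.MEDIUM, "regulated": Tier.HIGH}
# Question 4: is the output auditable?
AUDIT_STATUS = {
    "full": "ok to ship",
    "partial": "remediate before launch",
    "none": "cannot ship",
}


def classify(harm: str, audience: str, human_review: str, audit_trail: str) -> tuple[Tier, str]:
    """Return (tier, ship status) for a proposed AI feature."""
    shifted = HARM_TIER[harm] + REVIEW_SHIFT[human_review]
    clamped = min(max(shifted, Tier.LOW), Tier.HIGH)        # keep within Tier 1..3
    tier = Tier(max(clamped, AUDIENCE_FLOOR[audience]))     # audience floor applied last
    return tier, AUDIT_STATUS[audit_trail]


tier, status = classify("worse_decision", "regulated", "every_output", "partial")
print(tier.name, "-", status)
```

Applying the audience floor last means a feature consumed by regulated entities stays in Tier 3 even when a human reviews every output. That ordering is a conservative design choice in this sketch rather than something the tree itself specifies.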

What this looks like in practice

At Cotality, we went from zero to ten production AI features serving regulated clients. No AI-related regulatory incidents during my tenure. The governance framework wasn't the reason we were slow (we weren't slow). It was the reason we could be fast with confidence.

A Tier 1 feature (AI-generated content summaries for internal use) went from concept to production in weeks. Standard code review, basic eval suite, shipping.

A Tier 2 feature (AI-assisted property descriptions for agent-facing platforms) went from concept to production in roughly one quarter. Expanded eval suite, human-in-the-loop workflow, accuracy monitoring, rollback plan.

A Tier 3 feature (AI-generated analytics consumed by banking clients) took proportionally longer. Full governance review, comprehensive eval suite, 100% human audit at launch, continuous monitoring, incident response documentation.

The timeline difference is proportional to the risk, which is exactly how it should work. Fast where the risk is low. Thorough where the risk is high. Nothing is blocked unnecessarily. Everything that needs scrutiny gets it.

Governance that ships is governance that earns trust, with engineering, with leadership, with clients, and with regulators. Governance that blocks earns resentment and gets circumvented.

Build the framework that earns trust. I walk through the complete governance framework in the handbook, including the decision tree, tier definitions, and working group structure.

Frequently Asked Questions

How do you prevent teams from gaming the tier classification to avoid governance?

Two mechanisms. First, the product leader signs off on the tier classification, not the team that's building the feature. This separates the incentive (ship fast) from the classification (assess honestly). Second, conduct quarterly audits of tier classifications against actual feature behaviour. If a feature classified as Tier 1 is actually producing outputs that influence financial decisions, reclassify it and escalate.

What about AI features that span multiple risk tiers depending on the use case?

Classify to the highest applicable tier. If an AI-generated summary is Tier 1 when viewed internally but Tier 2 when shared with clients, the feature is Tier 2. The highest-risk use case determines the governance path. You can always add permissive paths for low-risk use cases within the higher governance framework, but you can't add restrictions retroactively without disrupting users.
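In code, this rule is a maximum over the feature's use cases. A minimal sketch, with the function name and the use-case labels chosen purely for illustration:

```python
def feature_tier(use_case_tiers: dict[str, int]) -> int:
    """Return the governing tier: the highest tier across all use cases."""
    if not use_case_tiers:
        raise ValueError("classify at least one use case")
    return max(use_case_tiers.values())


summary_feature = {"viewed internally": 1, "shared with clients": 2}
print(feature_tier(summary_feature))
```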

How do you handle model updates that might change the risk profile?

Every model update (model version change, prompt modification, training data refresh) triggers a re-evaluation against the existing eval suite. If the eval suite passes, the update ships. If it doesn't, the update is blocked until the regression is resolved. For Tier 3 features, model updates also trigger a governance notification so the working group is aware. This is automated, not manual, because manual notification processes get forgotten.
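A gate like this could be sketched as follows. The names are hypothetical, `candidate` stands in for whatever callable wraps the updated model or prompt, and `notify_working_group` stands in for whatever channel the working group actually uses. The sketch assumes the notification fires for every Tier 3 update, pass or fail.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EvalCase:
    prompt: str
    passes: Callable[[str], bool]   # True if the model output is acceptable


def gate_model_update(
    tier: int,
    eval_suite: list[EvalCase],
    candidate: Callable[[str], str],
    notify_working_group: Callable[[str], None],
) -> bool:
    """Return True if the update may ship, False if it is blocked."""
    failed = [c.prompt for c in eval_suite if not c.passes(candidate(c.prompt))]
    if tier == 3:
        # Tier 3 updates always notify the working group, pass or fail.
        notify_working_group(
            f"Tier 3 model update evaluated: {len(failed)} of {len(eval_suite)} cases failed"
        )
    return not failed


# Toy usage: a one-case suite and a stand-in "model".
suite = [EvalCase("2+2", lambda out: out == "4")]
messages: list[str] = []
print(gate_model_update(3, suite, lambda prompt: "4", messages.append))
print(messages)
```

Running the gate in the deployment pipeline, rather than relying on someone to remember it, is what makes the notification automatic.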

Logan Lincoln

Product executive and AI builder based in Brisbane, Australia. Nine years in regulated B2B SaaS, currently shipping production AI platforms. Written from experience building AI governance at Cotality.