Enterprise AI Adoption Playbook: Why Seats Go Unused

TL;DR

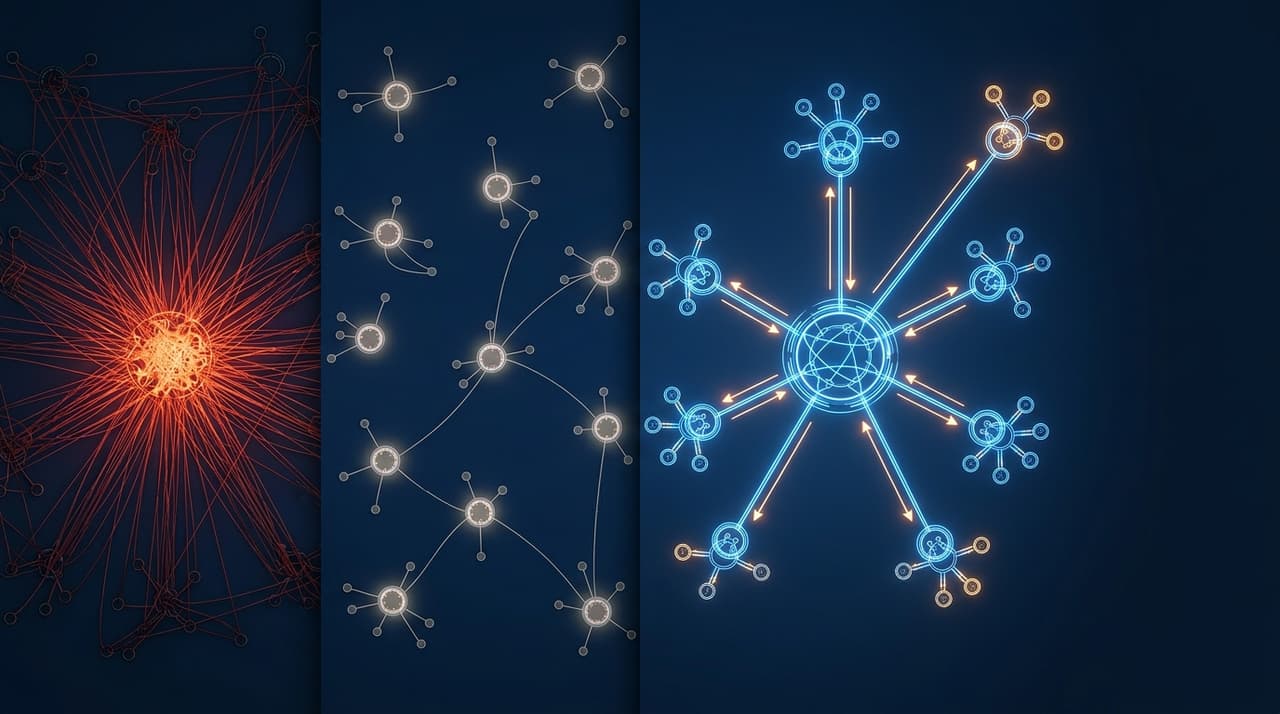

- Enterprise AI adoption fails at the "last mile": features that work technically but sit unused because they weren't designed for how people actually work

- Utilisation tracking (MAU-to-seat ratio) is the metric that predicts churn, not feature count or capability benchmarks

- Co-designing AI features with enterprise clients, rather than shipping and hoping, is the difference between 10% adoption and 65%+ adoption

At Cotality, I managed a flagship platform with thousands of active enterprise seats across CBA, NAB, ANZ, and hundreds of SMB customers. When we started rolling out AI features, the technology worked. The adoption didn't.

We'd ship an AI feature. The demo would impress stakeholders. The release notes would go out. Usage reports two months later would show single-digit adoption. Not because the feature was bad. Because we'd built it for a user who didn't exist: someone eager to change their workflow to accommodate a new AI capability.

Real users don't do that. Real users have muscle memory, ingrained workflows, and a healthy scepticism about anything that claims to save them time. They've been promised productivity gains before. They've been burned before. Your AI feature isn't competing with the status quo's quality. It's competing with the status quo's comfort.

This is the adoption problem, and it kills more AI initiatives than technical failure ever will.

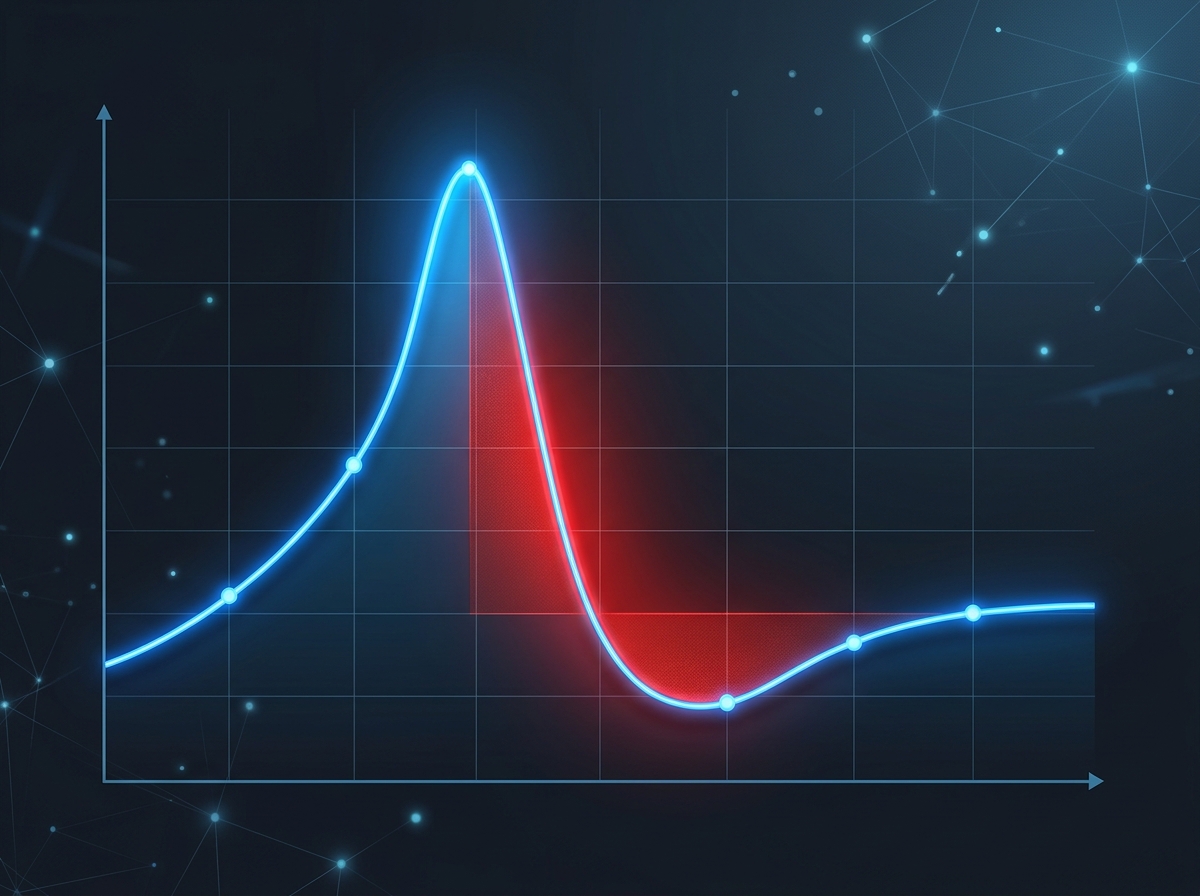

How do you measure whether enterprise AI adoption is working?

Most enterprise product teams track feature adoption as a secondary metric. They track MAU, revenue, NPS, support tickets. Adoption of specific features gets buried in a product analytics dashboard that someone checks monthly.

At Cotality, I made utilisation the north star. Not MAU in isolation, but MAU-to-seat ratio: of the seats we've sold, what percentage are actually active?

When I took over the platform, utilisation sat below industry benchmarks. A significant share of licensed seats were essentially dormant. Those dormant seats aren't just wasted potential. They're churn signals. An enterprise client paying for 200 seats but only using 120 will, at renewal time, either downsize or leave. Seats gathering dust are a ticking commercial time bomb.
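To make the metric concrete, here is a minimal Python sketch of the MAU-to-seat calculation, using the 200-seat, 120-active example above. The `Account` type, the account names, and the 0.7 risk threshold are illustrative assumptions, not Cotality's actual reporting code or benchmark.

```python
from dataclasses import dataclass


@dataclass
class Account:
    name: str
    seats_sold: int
    monthly_active_users: int


def utilisation(account: Account) -> float:
    """MAU-to-seat ratio: share of licensed seats that were active this month."""
    if account.seats_sold <= 0:
        raise ValueError("seats_sold must be positive")
    return account.monthly_active_users / account.seats_sold


def at_risk(accounts, threshold=0.7):
    """Accounts whose utilisation is below the threshold (0.7 is an assumed cut-off)."""
    return [a for a in accounts if utilisation(a) < threshold]


# Hypothetical accounts; the first mirrors the 200-seat example in the text.
accounts = [
    Account("example-bank", seats_sold=200, monthly_active_users=120),
    Account("example-smb", seats_sold=20, monthly_active_users=18),
]

for a in accounts:
    print(f"{a.name}: {utilisation(a):.0%}")

print("at risk:", [a.name for a in at_risk(accounts)])
```

The point of tracking the ratio per account rather than in aggregate is that a healthy overall MAU figure can hide individual clients who are drifting toward a downsized renewal.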

We pushed utilisation above target benchmarks and, in parallel, reduced churn by more than 30%. Those numbers are connected. The single best predictor of enterprise renewal isn't NPS. It isn't customer satisfaction scores. It's whether the people who have access to the product actually use it regularly.

AI features that don't get adopted make utilisation worse, not better. You've added complexity to the product without adding usage. The feature appears in the navigation, in the onboarding flow, in the help documentation. It takes up space. It doesn't earn its keep.

Why "launch and hope" fails for AI

Traditional feature launches follow a pattern. Ship the feature. Write release notes. Maybe run a webinar. Wait for organic adoption. For incremental improvements to existing workflows (a faster search, a better export, a redesigned dashboard), this works well enough. Users notice the improvement and adopt it naturally.

AI features break this pattern for three reasons.

Trust deficit. Users don't trust AI output by default. They've seen ChatGPT hallucinate. They've read the headlines. Asking them to trust AI-generated content in a professional context (a property valuation, a risk assessment, a client report) requires earning that trust incrementally. A webinar doesn't build trust. Consistent, verifiable accuracy builds trust.

Workflow disruption. Most AI features change how work gets done, not just how fast it gets done. An AI that drafts property descriptions doesn't just speed up the description-writing step. It changes the agent's entire listing workflow: where they start, what they review, what they edit. That's a workflow change, and workflow changes face resistance proportional to the change's magnitude.

Variable output quality. Traditional features are deterministic. Click the export button, get a CSV. Every time. AI features are probabilistic. The AI-generated description is different every time. Sometimes brilliant. Sometimes mediocre. Sometimes wrong in ways that require domain expertise to catch. Users who encounter a bad output early develop a lasting scepticism that's hard to reverse.

These three factors compound. Users don't trust the AI (trust deficit), the AI requires them to change how they work (workflow disruption), and when they try it, the quality is inconsistent (variable output). One bad experience in that context, and they're done. They revert to the old workflow and never come back.

The co-design model

The approach that actually worked was co-design: building AI features in partnership with enterprise clients rather than building in isolation and presenting finished products.

At Cotality, our Tier 1 bank clients (CBA, NAB, ANZ) weren't just customers. They were co-design partners. We shared early concepts. We ran proof-of-concept sessions with their teams. We incorporated their compliance requirements into the governance framework before we wrote a line of code.

This is slower than "ship fast and iterate." It's also dramatically more effective for enterprise AI adoption.

Co-design builds trust before launch. When users have been involved in shaping the feature, they arrive at launch with context and ownership. They understand what the AI does, what it doesn't do, and why certain design decisions were made. They're advocates, not sceptics.

Co-design surfaces workflow integration requirements early. Enterprise users will tell you, in vivid detail, where a proposed AI feature conflicts with their existing workflow, their compliance requirements, or their team's operating rhythm. This feedback at the concept stage saves months of post-launch iteration.

Co-design validates governance requirements. In regulated environments (banking, insurance, financial services), AI features carry compliance risk. Co-designing with clients who face that risk means governance requirements are built in from the start, not retrofitted after legal raises a concern.

The result at Cotality was that AI features co-designed with enterprise partners consistently achieved 3x to 5x higher adoption rates than features shipped without co-design. Same product team. Same technical capability. The difference was process, not technology.

The incremental trust ladder

You cannot earn trust in a single leap. You earn it in small, verifiable steps.

Step 1: AI as suggestion, human as decider. The AI generates a draft. The human reviews, edits, and approves. The AI is an assistant, not an authority. This is where every enterprise AI feature should start. No exceptions. Even if the AI is 99% accurate, starting with human-in-the-loop builds the behavioural foundation for trust.

Step 2: AI as default, human as exception handler. After users have reviewed enough AI outputs to develop confidence, the default flips. The AI output is presumed correct unless the human flags an issue. This is a UX change, not a capability change. The AI was always producing the output. The difference is whether the user's default action is "review everything" or "review when something looks off."

Step 3: AI as autonomous, human as auditor. The AI executes without per-item human review. Humans audit samples, review aggregate quality metrics, and intervene when the system flags uncertainty. This is where the spot-check architecture becomes essential: the system needs to know when it's uncertain so it can route those cases to humans.

Most teams try to jump to Step 3 immediately because it's the most impressive in demos and the most compelling in ROI calculations. Users reject it because they haven't built the mental model for trusting the AI's judgment. The ladder matters. Each step earns the trust that makes the next step possible.
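The Step 3 routing described above can be sketched roughly as follows. This is an illustration under stated assumptions, not a description of any production system: it assumes the AI system exposes a calibrated `confidence` score between 0 and 1, and the `min_confidence` and `audit_rate` values are hypothetical defaults you would tune per use case.

```python
import random
from enum import Enum


class Route(Enum):
    HUMAN_REVIEW = "human_review"  # system is uncertain: a person decides
    AUDIT_SAMPLE = "audit_sample"  # applied automatically, queued for spot-check
    AUTO = "auto"                  # applied automatically, no per-item review


def route_output(confidence, min_confidence=0.9, audit_rate=0.05, rng=None):
    """Decide how one AI output is handled at Step 3 of the trust ladder.

    confidence     -- assumed calibrated score in [0, 1] from the AI system
    min_confidence -- below this, the item goes to a human (assumed value)
    audit_rate     -- share of confident items sampled for audit (assumed value)
    rng            -- optional random.Random, useful for reproducible tests
    """
    rng = rng or random
    if confidence < min_confidence:
        return Route.HUMAN_REVIEW
    if rng.random() < audit_rate:
        return Route.AUDIT_SAMPLE
    return Route.AUTO


# Example: route a small batch of hypothetical confidence scores.
for score in (0.42, 0.93, 0.99):
    print(score, route_output(score).value)
```

Steps 1 and 2 need no routing logic of this kind, because a human sees every item; the difference between them is only which action is the default in the interface.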

Measuring adoption that matters

If you're tracking AI feature adoption, here's what to measure:

Adoption rate: Percentage of eligible users who have used the feature at least once. This measures awareness and initial trial. Target: 50%+ within 90 days.

Retention rate: Of users who tried the feature, what percentage used it again in the following month? This measures whether the feature earned a second look. Target: 60%+. Below 40% means the first experience is failing.

Override rate: How often do users edit or reject the AI output? A 30% override rate might be healthy (users are engaged and refining). An 80% override rate means the AI isn't good enough for the use case. A 5% override rate in a high-stakes domain means users aren't checking, which is a different kind of risk.

Time-to-value: How long from first use to the user completing a real task with the AI feature? If the onboarding requires 20 minutes of configuration before the AI does anything useful, you've lost most users. Aim for value within 60 seconds.

Utilisation impact: Did the AI feature increase overall platform utilisation, or did it just create a new metric to track? An AI feature that 30% of users love but that doesn't move the MAU-to-seat ratio isn't solving the adoption problem. It's adding complexity.
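As a rough sketch, the first three metrics can be computed from a simple usage log. The `Use` record, its fields, and the sample data below are hypothetical; a real product analytics schema will look different, and the targets in the text are not encoded here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Use:
    user: str
    month: int        # month index, e.g. 0 = launch month
    overridden: bool  # True if the user edited or rejected the AI output


def adoption_rate(uses, eligible_users):
    """Share of eligible users who used the feature at least once."""
    eligible = set(eligible_users)
    if not eligible:
        return 0.0
    tried = {u.user for u in uses} & eligible
    return len(tried) / len(eligible)


def retention_rate(uses):
    """Of users who tried the feature, share who used it again the month after.

    Note: users whose first use falls in the most recent month have not yet
    had a chance to return, so exclude them in a real report.
    """
    months_by_user = {}
    for u in uses:
        months_by_user.setdefault(u.user, set()).add(u.month)
    if not months_by_user:
        return 0.0
    returned = [m for m in months_by_user.values() if min(m) + 1 in m]
    return len(returned) / len(months_by_user)


def override_rate(uses):
    """Share of AI outputs that users edited or rejected."""
    if not uses:
        return 0.0
    return sum(u.overridden for u in uses) / len(uses)


# Hypothetical log: four eligible users, three of whom tried the feature.
eligible = ["ana", "ben", "cam", "dee"]
uses = [
    Use("ana", 0, True),
    Use("ana", 1, False),
    Use("ben", 0, False),
    Use("cam", 1, False),
    Use("cam", 2, True),
]

print(f"adoption:  {adoption_rate(uses, eligible):.0%}")
print(f"retention: {retention_rate(uses):.0%}")
print(f"override:  {override_rate(uses):.0%}")
```

Time-to-value and utilisation impact need timestamps and seat data respectively, so they are left out of this sketch. The override rate deserves reading in context rather than against a single threshold, for the reasons given above.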

The "inline, not destination" principle

The most consistent adoption pattern I've observed: AI features embedded in existing workflows outperform AI features that require users to navigate to a new destination.

An AI-generated property description that appears automatically in the listing creation flow (inline) will get 5x the usage of the same AI capability sitting behind a "Try AI Descriptions" button in the sidebar (destination). Same capability. Same quality. Different placement.

This connects directly to the architectural decisions in generative interfaces beyond chat. The form factor determines the adoption ceiling. If your AI feature lives in a separate tab, your adoption ceiling is the percentage of users willing to change their workflow to visit that tab. If your AI feature is woven into the existing workflow, your adoption ceiling is the percentage of users doing that workflow.

The uncomfortable truth

AI adoption is a product problem, not a training problem.

Most enterprise AI rollouts fail because of bad product design, not bad models. The model works. The feature works. The user doesn't care because the feature doesn't fit into their day. No amount of training, webinars, or email campaigns will fix a feature that was designed without regard for how the user actually works.

If your AI feature is sitting at single-digit adoption, the fix isn't more training. The fix is redesigning the feature to be inline rather than a destination, earning trust through the incremental ladder, and measuring utilisation as a first-class metric instead of an afterthought.

The organisations that treat adoption as a product discipline (co-designing with users, measuring utilisation obsessively, and earning trust incrementally) will see their AI investments pay off. Everyone else will have impressive demos and empty dashboards.

Frequently Asked Questions

How do you co-design with enterprise clients without slowing down development?

Run co-design in parallel with development, not sequentially. Share concepts and wireframes at the 20% stage, working prototypes at the 60% stage, and near-final features at the 90% stage. The co-design feedback at 20% shapes direction. The feedback at 60% catches workflow conflicts. The feedback at 90% validates edge cases. Total elapsed time for co-design is 2 to 4 weeks per feature, running alongside engineering, not blocking it.

What if the enterprise client's feedback conflicts with your product vision?

Listen carefully, then distinguish between "this doesn't fit my workflow" (a product problem you should solve) and "I don't want AI" (a change management challenge you should address differently). Most enterprise client feedback is the former: specific, actionable, and based on real workflow constraints. Incorporate it. The rare "I don't want AI" feedback is better addressed by the trust ladder and demonstrable value than by argument.

How do you prevent co-design from becoming design-by-committee?

Set clear boundaries. The client provides input on workflow integration, compliance requirements, and output quality expectations. The product team retains authority over the AI architecture, the UX design, and the feature scope. Co-design is collaboration on requirements, not delegation of design decisions. One product lead with final authority prevents committee dynamics.

Logan Lincoln

Product executive and AI builder based in Brisbane, Australia. Nine years in regulated B2B SaaS, currently shipping production AI platforms. Written from experience with enterprise AI at Cotality.