AI-Native Team Design

Why smaller AI-augmented teams outperform larger ones, the judgment-vs-patience framework for deciding what to delegate, and org patterns for the builder era.

TL;DR

- Revenue is decoupled from headcount. A single product builder with AI tools ships what used to take a team of five, so team design must optimise for judgment and taste rather than execution capacity.

- AI replaces patience-heavy work (processing, monitoring, formatting), not judgment-heavy work (prioritising, negotiating, designing strategy). Staff accordingly.

- Builder pods of two to three people with full autonomy, supported by platform teams and cross-cutting guilds, consistently outperform traditional PM-designer-engineer trios managed through layers of coordination.

The structural shift

For decades, scaling a product organisation meant one thing: hiring more people. More engineers meant more features. More PMs meant more roadmap coverage. Revenue growth tracked headcount growth because execution capacity was the binding constraint.

That coupling is breaking. AI coding assistants, agentic workflows, and prototype-grade tooling have collapsed the distance between "one person with an idea" and "working software in production." A product builder who prompts well, evaluates critically, and ships with taste can produce output that previously required a cross-functional team of five. Not a slide deck describing what to build. Working code.

The implication is uncomfortable for anyone who manages by headcount: adding people to an AI-augmented team often makes it slower. Each new person adds coordination cost, context switching, and communication overhead. In a traditional team, those costs were worth paying because the additional execution capacity more than compensated. When AI handles most of the execution, you're paying the coordination tax without getting the throughput dividend.
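One way to make the coordination tax concrete is the classic pairwise-channel count often cited alongside Brooks's law. A team of n people has n(n-1)/2 possible one-to-one communication paths. This is a simplifying assumption, not a figure from this chapter: it treats every pair as a channel that needs maintaining. The sketch only shows how fast paths grow relative to headcount.

```python
def communication_paths(team_size: int) -> int:
    """Distinct one-to-one channels in a team where anyone may need to talk to anyone."""
    if team_size < 0:
        raise ValueError("team_size must be non-negative")
    # Each of n people can pair with n - 1 others; divide by 2 so each pair counts once.
    return team_size * (team_size - 1) // 2


if __name__ == "__main__":
    for n in (1, 2, 3, 5, 8, 12):
        print(f"{n:>2} people -> {communication_paths(n):>2} channels")
```

Going from a three-person pod (3 channels) to an eight-person team (28 channels) multiplies headcount by less than three but pairwise channels by more than nine. Real teams don't use every channel, but the quadratic shape is why coordination cost grows faster than headcount.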

This doesn't mean teams shrink to zero. It means the composition changes. You need fewer people who execute instructions and more people who form clear intent, evaluate output, and make judgment calls under ambiguity. In the limit, an empowered team may be a single product builder with AI tooling, owning a problem end-to-end.

Judgment vs patience

Not all work responds to AI augmentation equally. The distinction that matters for team design is whether a task is patience-heavy or judgment-heavy.

Patience-heavy work is work that humans can do but find tedious, repetitive, or time-consuming. Processing 500 invoices. Monitoring twelve dashboards for anomalies. Searching across forty documents for a specific clause. Formatting a report. Generating test cases from a spec. These tasks require attention and reliability, not original thinking. AI handles them faster, cheaper, and more consistently than humans.

Judgment-heavy work is work that requires weighing competing values, navigating ambiguity, or making decisions with incomplete information. Choosing which market to enter. Deciding whether to fire a vendor. Negotiating a contract. Designing a pricing strategy. Setting quality standards. These tasks require context, experience, and the willingness to be wrong. AI can inform these decisions. It cannot make them.

The team design principle follows directly: automate patience, amplify judgment.

Every role in your organisation sits somewhere on the patience-to-judgment spectrum. Audit your team by asking, for each person, what percentage of their week is spent on patience work versus judgment work. If someone spends 80% of their time on patience-heavy tasks, that role is a candidate for AI augmentation or elimination. If someone spends 80% on judgment-heavy tasks, that person becomes more valuable in an AI-augmented team, not less.
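The audit above can be sketched as a small script. This is a minimal sketch under stated assumptions: the `RoleAudit` fields, the role names, and the hours are hypothetical, and the only rule taken from the text is the 80% threshold. It computes each person's patience share of the week and sorts roles into the categories the paragraph describes.

```python
from dataclasses import dataclass


@dataclass
class RoleAudit:
    """One person's week split into patience-heavy and judgment-heavy hours (hypothetical fields)."""
    name: str
    patience_hours: float
    judgment_hours: float

    @property
    def patience_share(self) -> float:
        total = self.patience_hours + self.judgment_hours
        if total == 0:
            raise ValueError(f"{self.name} has no hours recorded")
        return self.patience_hours / total


def classify(audit: RoleAudit, threshold: float = 0.8) -> str:
    """Apply the 80% rule of thumb; anything between the two thresholds is a mixed role."""
    share = audit.patience_share
    if share >= threshold:
        return "candidate for AI augmentation or elimination"
    if 1 - share >= threshold:
        return "judgment-heavy: more valuable with AI"
    return "mixed: automate the patience portion"


if __name__ == "__main__":
    # Hypothetical team data for illustration only.
    team = [
        RoleAudit("analyst", patience_hours=36, judgment_hours=4),
        RoleAudit("pricing lead", patience_hours=4, judgment_hours=36),
        RoleAudit("PM", patience_hours=20, judgment_hours=20),
    ]
    for person in team:
        print(f"{person.name}: {person.patience_share:.0%} patience -> {classify(person)}")
```

The middle band matters: most roles will land there, and for them the useful output is not a verdict on the role but a list of the patience tasks worth automating first.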

The mistake most organisations make is applying AI uniformly. They give everyone the same tools and expect uniform productivity gains. The gains are wildly uneven. Roles heavy on patience work see 3x to 5x productivity increases. Roles heavy on judgment work see marginal improvements in efficiency but massive improvements in the quality of information available for decisions.

Three questions for your org chart

Take your current org chart and ask these three questions. The answers will tell you whether your structure is built for the AI era or anchored to the previous one.

Are you hiring for autonomy or capacity?

One senior product builder with AI tools and full decision-making authority will outperform three junior PMs who need approval at every step. The junior PMs generate coordination overhead: status meetings, review cycles, escalation paths. The senior builder ships.

This isn't a universal rule. Junior people still need to be developed, and mentorship still matters. But if your hiring plan is "we need three more PMs to cover all the roadmap items," you're hiring for capacity in an era where capacity is no longer the constraint. Hire one person who can own a problem end to end, give them AI tools and autonomy, and watch what happens.

Are your management layers killing speed?

Every management layer between the person closest to the problem and the person who can approve a decision adds latency. It also dilutes context. The manager hears a summary of what the PM heard from the customer, then passes a summary of that summary to the director.

In a traditional organisation, those layers existed because coordination was necessary. Ten teams working on related products need alignment. But when AI handles most of the execution, coordination cost is no longer offset by extra throughput, and it becomes a competitive disadvantage. Your competitor with a flat team of three builders is shipping while your five-layer hierarchy is still scheduling the alignment meeting.

Count the layers between a customer insight and a shipped change. If the answer is more than two, your org chart is slowing you down.
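A rough way to run this count is to walk a reporting chain. The sketch below assumes one interpretation: "layers" means the managers strictly between the person closest to the problem and the person who can approve the change, as described earlier in this section. The `reports_to` mapping, the role names, and `layers_between` are all hypothetical.

```python
def layers_between(reports_to: dict[str, str], closest_to_problem: str, approver: str) -> int:
    """Count management layers strictly between two people in a reporting chain.

    reports_to maps each person to their manager (hypothetical org data).
    Walks upward from the person closest to the problem until it reaches the approver.
    """
    layers = 0
    current = closest_to_problem
    seen = {current}
    while current != approver:
        if current not in reports_to:
            raise ValueError(f"{approver} is not above {closest_to_problem} in this chain")
        current = reports_to[current]
        if current in seen:
            raise ValueError("reporting chain contains a cycle")
        seen.add(current)
        if current != approver:
            layers += 1  # an intermediate manager the insight must pass through
    return layers


if __name__ == "__main__":
    traditional = {"PM": "senior PM", "senior PM": "group PM", "group PM": "director", "director": "VP"}
    pod = {"builder": "head of product"}
    for label, chart, start, approver in (
        ("traditional", traditional, "PM", "VP"),
        ("builder pod", pod, "builder", "head of product"),
    ):
        n = layers_between(chart, start, approver)
        verdict = "slowing you down" if n > 2 else "ok"
        print(f"{label}: {n} layers -> {verdict}")
```

A builder pod with authority to ship within its domain is the degenerate case: the person closest to the problem is also the approver, so the count is zero.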

Is taste your new technical debt?

When agents can generate anything, quality depends entirely on the person evaluating the output. If your team lacks taste (the ability to distinguish "technically correct" from "worth shipping"), you will produce high volumes of mediocre work at unprecedented speed. The product competency model defines what "taste" looks like at each proficiency level and how to develop it deliberately.

Taste compounds like technical debt, except in reverse. A team with strong taste makes better decisions on every feature, every sprint, every quarter. The gap between them and a team without taste widens exponentially. And unlike technical debt, you can't fix a taste deficit by throwing engineers at it. You fix it by hiring people with judgment and giving them the authority to use it.

The builder-team model

The traditional product trinity (PM, designer, engineer) assumed a clean separation of concerns. The PM defines what to build. The designer defines how it looks. The engineer builds it. Each role has a distinct skillset and a clear handoff point.

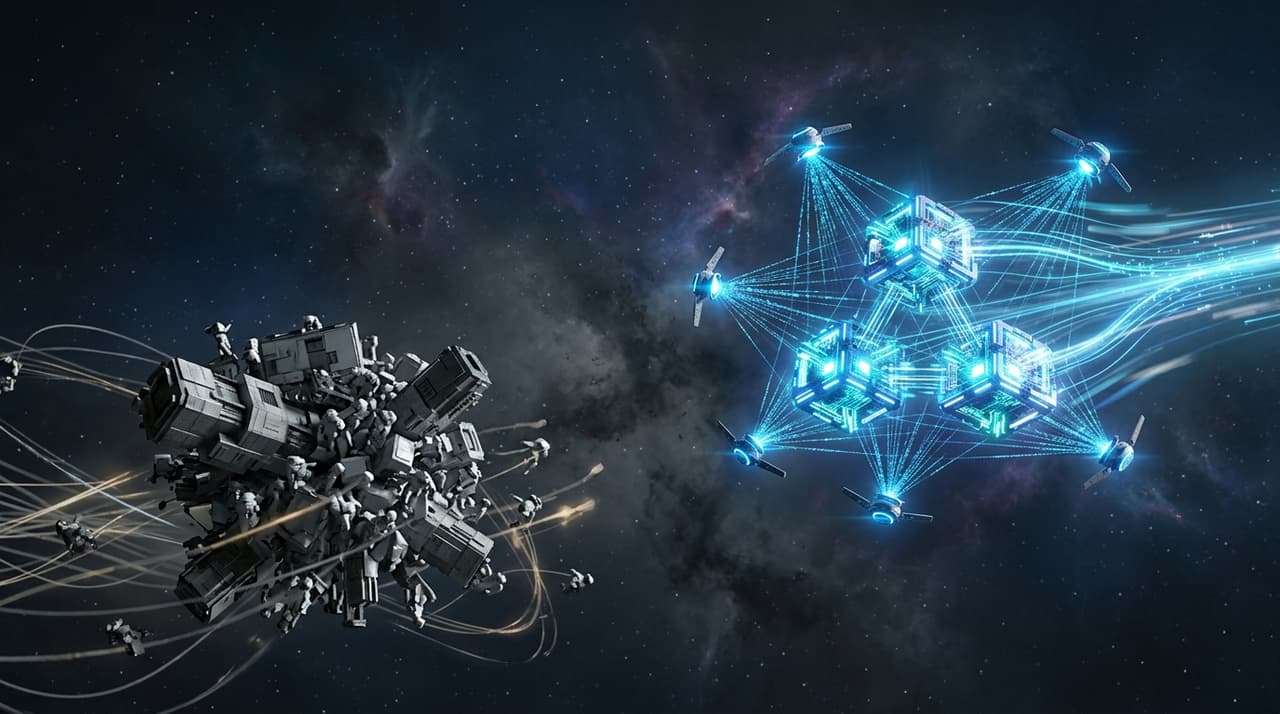

AI tooling is dissolving those boundaries.

A product builder who prototypes with AI coding tools is a "shadow engineer," producing working code without formal engineering training. A designer using generative tools can produce functional frontends, not just mockups. An engineer with AI assistance can run user research, write copy, and build analytics dashboards.

The role boundaries aren't gone. Deep engineering expertise still matters for production systems. Design craft still matters for complex interaction patterns. Product judgment still matters for strategy. What's changed is that the overlaps are larger and the handoffs are fewer.

Builder teams reflect this reality. Instead of three specialists with clean role boundaries and formal handoff processes, you have two or three generalists with deep expertise in one area and working competence across the others. They share context directly. They review each other's work in real time. They ship without waiting for the next team's sprint capacity.

Org patterns that work

Builder pods

Two to three people. Full ownership of a problem space. Direct access to customers. Authority to ship without external approval for changes within their domain.

Each pod member has a primary strength (engineering, design, product strategy) but operates across boundaries. The engineer reviews the PM's prototype. The PM writes eval criteria for the engineer's AI feature. Everyone talks to customers.

Pods work because they eliminate the coordination overhead that kills speed in larger teams. No handoff meetings. No cross-team dependency negotiations. No "we need to wait for Team X's sprint capacity." The pod owns the problem and the solution.

Platform teams serving pods

Builder pods need infrastructure they shouldn't build themselves: shared model routing, authentication, eval tooling, deployment pipelines, observability, cost monitoring.

Platform teams provide this. They're the internal service layer that makes pods productive. The relationship is explicit: platform teams exist to serve pods, not to gatekeep them. If a pod needs a new capability, the platform team's default answer is "yes, here's how," not "file a ticket."

Platform teams are the exception to the "small teams" principle. They can be larger because their work is more patience-heavy (infrastructure, tooling, reliability) and benefits from specialisation. But they should still be lean. Five to seven people covering shared infrastructure for ten pods is about right.

Guilds for cross-cutting concerns

Some problems span all pods but don't justify a dedicated team: AI governance, design system consistency, security practices, cost management, accessibility standards.

Guilds are lightweight communities of practice. One person from each pod participates. They meet fortnightly, share patterns, establish standards, and escalate issues that need org-wide decisions. The AI governance chapter describes one such guild in action: a standing governance working group that meets fortnightly to review high-risk AI features. Guilds have influence but not authority. They can't block a pod from shipping. They can flag concerns and recommend approaches.

The guild model works because it provides cross-cutting coordination without adding management layers. No guild leader who becomes a bottleneck. No approval process that adds latency. Just shared context and voluntary alignment.

The dissolution of the trinity

These patterns share a common thread: the PM-designer-engineer trinity is no longer the atomic unit of product development. The atomic unit is the builder, a person who can move fluidly across problem definition, prototyping, evaluation, and shipping.

Teams composed of builders don't need role-based handoffs. They need shared context, clear ownership boundaries, and the taste to know when something is ready to ship. The empowered teams chapter covers how to give these pods the authority and accountability structure that makes autonomy productive rather than chaotic.

The manager's responsibility for team fluency

Org design changes the structure. Fluency development changes what the structure can do.

These are different kinds of work, and most discussions of AI-native org design conflate them. You can build builder pods, flatten your hierarchy, and give teams autonomy, and still have a team stuck in low-leverage AI usage because nobody made it their job to raise the team's floor.

The failure mode is a manager who is personally capable with AI but whose team is still doing most of the same work the same way. This is common. It happens because personal AI fluency develops privately — one person's workflows improve — and it doesn't automatically transfer to anyone else. The manager assumes fluency is contagious. It isn't.

What actually moves a team isn't tool availability or training sessions. It's the same conditions that produce any other kind of team capability development.

Explicit expectations. "I expect everyone on this team to be actively developing their AI fluency" is a different kind of statement than "we've got AI tools available to use." The first is a performance expectation with observable outcomes. The second is an open invitation that most people won't take up unprompted.

Protected time for improvement. If the sprint is entirely full of delivery work, nobody will invest in building repeatable systems or redesigning workflows. Stage 2 fluency (building things others depend on) takes time that has to come from somewhere. If it's never allocated, it doesn't happen.

Sharing what works. The manager who documents their own AI methods, shares prompt libraries, and openly iterates on their workflows in front of the team is doing something categorically different from the manager who uses AI quietly. The first shows what progression looks like. The second creates no signal.

Active unblocking. When team members are capable of redesigning significant workflows but aren't doing it, the question is why. Usually it's one of three things: they don't have permission to change processes other people use, the disruption risk feels too high without explicit backing, or nobody's made it a priority over delivery. The manager's job is to remove those blockers, not wait for team members to self-organise around them.

A manager who builds their own AI fluency without investing in the team's isn't leading. They're doing individual development and calling it leadership. The AI fluency spectrum article covers this in more detail: what it means to move from personal AI usage to systems that benefit the whole team.

What not to do

Don't restructure all at once. Org changes are disruptive. Start with one builder pod on a contained problem. Measure their output against a traditional team working on a comparable problem. Let the results make the argument for you.

Don't confuse "fewer people" with "cheaper." Builder pods require senior people with broad skills and strong judgment. Those people cost more individually. The savings come from eliminating coordination overhead and shipping faster, not from paying less per person.

Don't strip out all specialists. Deep expertise still matters. The question is whether specialists sit inside every team or whether they serve multiple pods through a platform team or guild model. Production-grade security, data engineering, and ML infrastructure need genuine specialists. They just don't need to be embedded in every pod.

Don't ignore the humans. Restructuring teams means changing people's roles, reporting lines, and identities. A PM who's been told their value is in writing specs now hears that value has moved to building prototypes. Handle the transition with honesty and support, or you'll lose good people to fear rather than performance.

Don't adopt the model without the tooling. Builder pods only work when each member has access to AI coding tools, prototyping environments, eval frameworks, and direct deployment capability. Asking people to operate as builders while gating them behind legacy tooling and approval processes guarantees failure.

Behaviour table

| Behaviour | Traditional org | AI-native org |
|---|---|---|
| Scaling output | Hire more people | Augment existing people with AI tools; add headcount only for net-new judgment |
| Team composition | PM + designer + engineer as fixed trinity | Builder pods of 2–3 generalists with deep primary skills |
| Role boundaries | Clean separation, formal handoffs | Fluid boundaries, shared context, overlapping capabilities |
| Management layers | Directors, group PMs, senior PMs, PMs | Pod leads with direct access to leadership; minimal hierarchy |
| Speed bottleneck | Engineering capacity | Judgment and taste |
| Quality assurance | Review processes and approval gates | Taste embedded in every builder; eval frameworks over approval chains |
| Cross-cutting concerns | Dedicated teams with authority to block | Lightweight guilds with influence, not authority |

Anti-pattern: the headcount reflex

The product portfolio is growing. Leadership looks at the roadmap, counts the initiatives, divides by the number of PMs, and concludes they need to hire four more.

Nobody asks whether the existing team could cover twice the roadmap with better tooling and fewer coordination meetings. Nobody audits how much of each PM's week is spent on patience work that AI could handle. Nobody questions whether the management layers between the customer and the shipped feature are adding value or adding latency.

So the company hires four more PMs. Each one needs onboarding, context, and a manager. The manager needs a manager. Meetings multiply. Alignment becomes a full-time job. Six months later, the team is larger but not faster. Often slower.

The headcount reflex is the assumption that more people equals more output. It was roughly true when execution capacity was the binding constraint. It is actively false when AI handles execution and the constraint is judgment. Four new PMs with mediocre judgment and no builder skills will produce four times the volume of mediocre work. One senior builder with taste and autonomy will ship the thing that actually matters.

Before you open a headcount req, ask: could an existing builder with better tools and fewer meetings do this work? If the answer is yes, the problem isn't headcount. It's how you've organised the headcount you already have. Constraint is the adoption strategy: teams deliberately under-resourced with people and over-resourced with AI tools consistently produce the best AI-native work, because the constraint forces them to automate instead of hire.

v2.1 · Updated Apr 2026