Micro-Optimisation Is a Trap When Your Market Is Exponential

TL;DR

- In linear markets (product value grows 30–50% over two years), micro-optimisations capture a meaningful share of available value. In exponential markets (100–1000x growth), they capture a rounding error.

- Most growth teams are running a playbook designed for linear markets. The right allocation in an exponential market inverts: 70% big bets, 30% incremental.

- The diagnostic that matters isn't "are we growing?" It's "is the total addressable value of our product expanding faster than our ability to capture it?"

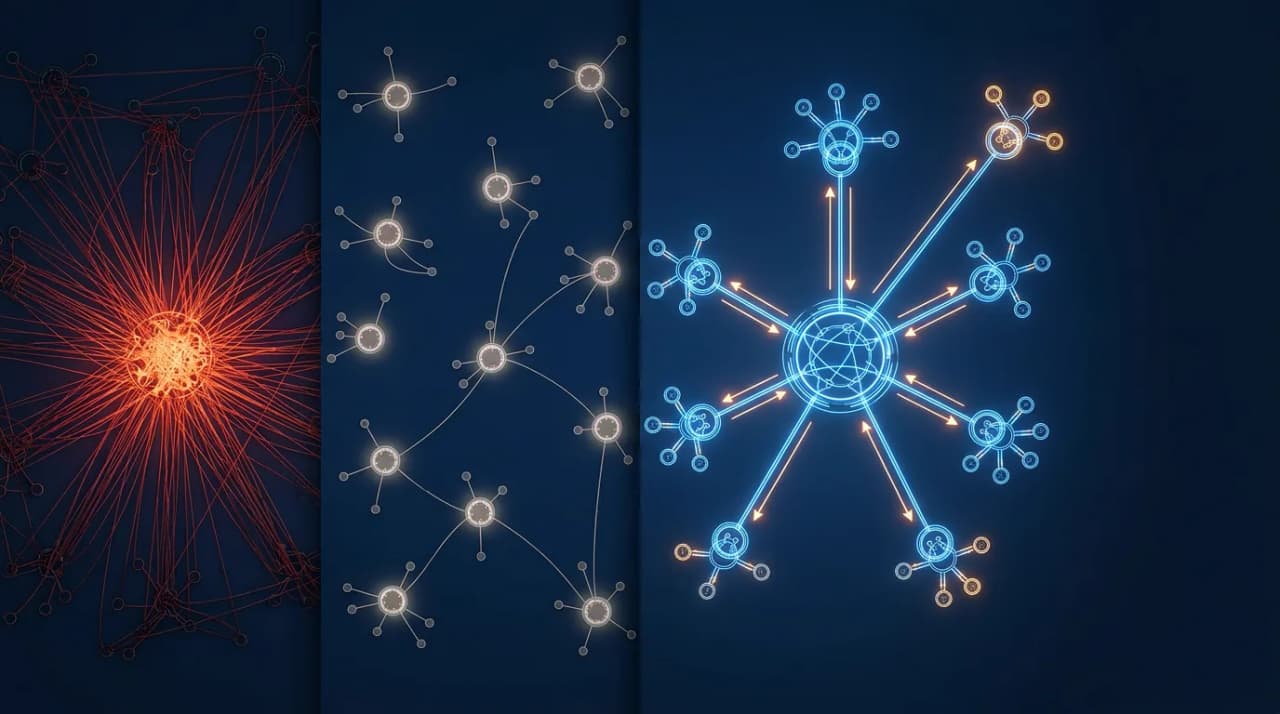

Most growth teams allocate 60–70% of effort to small experiments and 30–40% to big bets. That split is correct for linear markets where product value grows 30–50% over two years. It's catastrophically wrong for exponential markets where value may grow 100–1000x.

Every growth team I've worked with follows this same pattern. The bulk of the effort goes to button colour tests, copy changes, funnel optimisations, and pricing page tweaks. The remaining capacity goes to new features, new markets, new product lines.

The difference between these two market types isn't academic. It determines whether your growth team is capturing value or rearranging deck chairs on a ship that's accelerating without them.

Why does micro-optimisation work in linear markets?

Most SaaS businesses operate in linear markets. A project management tool's value proposition improves 30–50% over two years through better integrations, polish, and incremental feature additions. A food delivery app gets marginally better at matching drivers and predicting demand.

In these markets, the total pie grows slowly. Your share of that pie is the thing you can control. Micro-optimisations work because a 3% improvement in sign-up conversion or a 5% lift in activation rate compounds into real revenue over quarters. The pie isn't getting much bigger, so capturing a larger slice of the existing pie is the rational strategy.
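To make the compounding concrete, here is a minimal sketch of a two-step funnel. Every baseline number in it (visitor count, conversion rates, revenue per activated user) is an assumption chosen for illustration, not a figure from any real team.

```python
# Illustrative only: all baseline numbers below are assumptions.
def quarterly_revenue(visitors, signup_rate, activation_rate, revenue_per_user):
    """Revenue from a simple funnel: visit -> sign-up -> activate."""
    return visitors * signup_rate * activation_rate * revenue_per_user

baseline = quarterly_revenue(100_000, 0.10, 0.40, 50.0)

# Apply the two relative lifts from the text:
# 3% on sign-up conversion, 5% on activation.
optimised = quarterly_revenue(100_000, 0.10 * 1.03, 0.40 * 1.05, 50.0)

combined_lift = optimised / baseline - 1
print(f"baseline revenue:  {baseline:,.0f}")
print(f"optimised revenue: {optimised:,.0f}")
print(f"combined lift:     {combined_lift:.2%}")
```

The two lifts multiply rather than add (1.03 × 1.05 = 1.0815), so the combined lift is about 8.15% on the same pie. In a market where the pie itself only grows 30–50% over two years, that is a meaningful gain.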

Growth teams at Atlassian, Spotify, and DoorDash refined this playbook over a decade. They got very good at it. The experimentation frameworks and statistical rigour behind those wins evolved for a world where product value grows at roughly the same rate as the market.

That playbook is muscle memory for most of the industry. It feels responsible. It produces visible results on dashboards. It's easy to staff and easy to measure.

And it's wrong for what's happening now.

Why does micro-optimisation fail in exponential markets?

AI products don't live in linear markets. The total value a foundation model can deliver to users is growing at a rate that has no precedent in SaaS history.

Consider the math. Eighteen months ago, AI coding assistance meant autocomplete and simple code suggestions. Today, agentic coding tools can architect features, write tests, refactor entire modules, and manage multi-file changes autonomously. The total value of an AI coding product didn't grow 30%. It grew by an order of magnitude. Agentic coding is now a larger market than the entire AI coding market was when it started.

If your product's total addressable value will be 100x to 1,000x greater in two years than it is today, what does a 3% conversion lift on your current sign-up page actually capture?

A rounding error.

Not a small win that compounds. A literal rounding error relative to the value that's about to exist. The team that spent a quarter perfecting their pricing page missed the fact that an entirely new use case opened up and is now bigger than their current product.
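A back-of-envelope version of that claim, normalising today's addressable value to 1 and assuming, purely for illustration, that a 3% conversion lift translates one-for-one into 3% more captured value. The function name is hypothetical.

```python
def lift_share_of_future(lift, growth_multiple):
    """Gain from a lift on today's value (normalised to 1.0),
    expressed as a fraction of the future addressable value."""
    if growth_multiple <= 0:
        raise ValueError("growth_multiple must be positive")
    return lift / growth_multiple

# Linear range (1.3x, 1.5x) versus exponential range (100x, 1000x).
for growth in (1.3, 1.5, 100, 1000):
    share = lift_share_of_future(0.03, growth)
    print(f"{growth:>6}x market: lift equals {share:.4%} of future value")
```

In the linear range the lift is still worth roughly 2% of the future value. At 100x it is 0.03%, and at 1,000x it is 0.003%.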

Property data is a useful case study. For years, growth teams in that space ran conventional motions: optimising conversion funnels, testing pricing tiers, improving onboarding flows. Those optimisations worked. They produced measurable quarterly gains. Then AI shifted the underlying value proposition from "look up a number" to "predict outcomes, automate valuations, and generate insights across portfolios." The total addressable value of the product category expanded dramatically. The teams that had spent the quarter optimising an existing funnel were now running experiments on a surface that represented a shrinking fraction of where the value was heading.

How should resource allocation change in an exponential market?

In a linear market, the conventional split makes sense: 60–70% incremental, 30–40% big bets.

In an exponential market, invert it. 70% on larger bets. 30% on incremental.

The reasoning is straightforward. When the total pie is growing at 10x year over year, the marginal return on capturing a slightly larger slice of today's pie is dwarfed by the return on positioning yourself to capture even a small slice of tomorrow's pie.
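One way to put a number on that trade-off is to ask how small a slice of the newly created value a big bet needs to capture to match an incremental win. The sketch below normalises today's pie to 1; the 10% current share and 3% relative lift are hypothetical inputs, and the function name is made up for this example.

```python
def break_even_share(current_share, relative_lift, pie_growth):
    """Share of newly created value a big bet must capture to match
    the gain from a relative lift on today's share of today's pie.
    Today's pie is normalised to 1.0; pie_growth is next year's pie."""
    if pie_growth <= 1.0:
        raise ValueError("pie_growth must be greater than 1.0")
    incremental_gain = current_share * relative_lift  # gain on today's pie
    new_value = pie_growth - 1.0                      # value that did not exist before
    return incremental_gain / new_value

# Hypothetical inputs: 10% share today, 3% relative lift.
for growth in (1.2, 10.0):
    share = break_even_share(0.10, 0.03, growth)
    print(f"{growth}x pie: break-even share of new value = {share:.3%}")
```

With a pie growing 1.2x, the big bet has to win about 1.5% of the new value to match the incremental gain. At 10x, it needs only about 0.033%.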

Larger bets, in this context, mean:

- New market creation. If a capability that didn't exist six months ago now enables an entirely new use case, that's where the team should be spending most of its energy. Not polishing the existing use case.

- Product expansion into adjacent value. The Chrome extension that enables a new interaction surface. The API integration that opens a new buyer persona. The feature that makes the product relevant to a user segment that didn't need it before.

- Infrastructure for future capabilities. Building the scaffolding that lets the product absorb the next model improvement without a redesign. This is a bet on the exponential curve continuing, and if you're in an AI market, that's a bet worth making.

This doesn't mean stopping all incremental work. The 30% allocation for small experiments still matters. Broken funnels still need fixing. Obvious friction still needs removing. The difference is that incremental work becomes maintenance, not strategy. And bigger bets require more PM bandwidth, which is already the scarcest resource on AI-amplified teams.

How to know if your market is exponential

Not every market affected by AI is exponential. Some are linear markets with AI bolted on. The diagnostic:

Is the total addressable value of your product expanding faster than your ability to capture it? If yes, you're in an exponential market. If no, you're in a linear market with AI features.
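If you have even rough estimates, the diagnostic can be checked numerically by tracking the ratio of captured value to addressable value over time. This is a sketch under assumptions: the series below are invented, the function names are hypothetical, and "strictly falling ratio" is just one simple way to operationalise "expanding faster than our ability to capture it".

```python
def capture_ratios(addressable, captured):
    """Return captured / addressable for each period."""
    if len(addressable) != len(captured):
        raise ValueError("series must be the same length")
    if len(addressable) < 2:
        raise ValueError("need at least two periods to see a trend")
    return [c / a for a, c in zip(addressable, captured)]

def value_outrunning_capture(addressable, captured):
    """True if the capture ratio falls in every successive period."""
    ratios = capture_ratios(addressable, captured)
    return all(later < earlier for earlier, later in zip(ratios, ratios[1:]))

# Hypothetical estimates in arbitrary units, one entry per half-year.
addressable = [100, 300, 1_000, 4_000]
captured = [10, 18, 30, 50]

print(capture_ratios(addressable, captured))
print(value_outrunning_capture(addressable, captured))
```

In this made-up series, captured value grows 5x, which would look healthy on a dashboard, while the capture ratio falls in every period. That combination is the pattern the diagnostic is looking for.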

Three signals that suggest exponential dynamics:

- New use cases are emerging that didn't exist a year ago. Not improvements to existing use cases, but entirely new things people can do with your product category. AI coding went from autocomplete to agentic architecture in 18 months. That's exponential.

- The value of what you deliver changes with each model generation. If a new foundation model release doesn't meaningfully change what your product can do, you're probably in a linear market. If each model release unlocks capabilities that your customers immediately want, you're riding the exponential curve.

- Your competition is coming from categories that didn't previously compete with you. When the market is expanding, competitors show up from adjacent spaces. If your competitors are the same companies doing roughly the same things with AI sprinkled on top, the market is linear.

Why is Wall Street making the same mistake?

Wall Street is making the same error at the macro level. Financial models treat AI infrastructure spending as a fixed-pie, zero-sum market. Company A gains share, Company B loses share, total market stays roughly constant.

That framing ignores the consumption explosion that has accompanied every major platform shift. PCs, cloud computing, mobile, SaaS: each one looked like a finite market until usage patterns expanded by orders of magnitude. People thought the server market was a fixed number of units until cloud consumption made the old market look like a rounding error. Salesforce turned a $2 billion annual CRM market into something dramatically larger by changing the consumption model.

The same pattern is playing out with AI infrastructure. Every company running AI workloads is consuming more compute than their forecasts predicted. The market isn't being divided. It's expanding.

For product leaders, the lesson is the same at the company level as it is at the portfolio level: if you're allocating resources as though the opportunity is fixed, you're optimising for today's pie while tomorrow's pie grows without you.

Run the diagnostic on your own team

Pull up your growth team's experiment backlog. Categorise each item as either incremental (improves an existing metric on an existing surface) or expansive (creates new value, reaches new users, or captures a capability that didn't exist six months ago).

If your market is exponential and more than half your backlog is incremental, you're running the wrong playbook.
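The audit itself is a few lines once the backlog is labelled. A minimal sketch, assuming a hypothetical backlog where each item has already been tagged by hand with one of the two categories above:

```python
from collections import Counter

# Hypothetical backlog; items and labels are invented for illustration.
backlog = [
    ("Pricing page headline test", "incremental"),
    ("Onboarding checklist copy", "incremental"),
    ("Sign-up button colour test", "incremental"),
    ("Agentic workflow for a new buyer persona", "expansive"),
    ("API integration for an adjacent segment", "expansive"),
]

def incremental_share(items):
    """Fraction of backlog items labelled 'incremental'."""
    if not items:
        raise ValueError("backlog is empty")
    counts = Counter(kind for _, kind in items)
    unknown = set(counts) - {"incremental", "expansive"}
    if unknown:
        raise ValueError(f"unexpected categories: {unknown}")
    return counts["incremental"] / len(items)

share = incremental_share(backlog)
print(f"incremental share: {share:.0%}")
if share > 0.5:
    print("More than half the backlog is incremental.")
```

Counting items is a simplification. Weighting each item by estimated effort would track the allocation split more closely, at the cost of needing effort estimates.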

The fix isn't to stop being rigorous. It's to apply that rigour to the right problems. Test your way into new markets with the same discipline you'd apply to a pricing page experiment. Run the same statistical frameworks on your big bets that you run on your button colour tests. The methodology transfers. The allocation of effort is what needs to change.

Micro-optimisation isn't wrong. It's misapplied when the market underneath you is moving faster than your experiments can measure.

Frequently Asked Questions

What if I'm wrong about my market being exponential?

If you invest 70% in big bets and the market turns out to be linear, you'll over-invest in exploration and under-invest in optimisation. That's recoverable. The opposite mistake, treating an exponential market as linear, is much harder to recover from because the window for capturing new value passes quickly.

How do I convince leadership to shift allocation?

Frame it in terms of opportunity cost, not risk. Show the total addressable value curve and ask: "What percentage of this expanding value does our current experiment backlog capture?" If the answer is small, the case makes itself.

Does this apply to non-AI markets?

Yes, any time a market undergoes a platform shift that expands total addressable value. Cloud computing in the early 2010s, mobile in the late 2000s, and now AI. The pattern is the same: linear playbooks underperform during exponential expansions.

Related: "Build for the Model That Doesn't Exist Yet" and "Your Killer Feature Will Be Cloned by Friday. Build a Moat."

Logan Lincoln

Product executive and AI builder based in Brisbane, Australia. Nine years in regulated B2B SaaS, currently shipping production AI platforms. Written from experience shipping AI products.